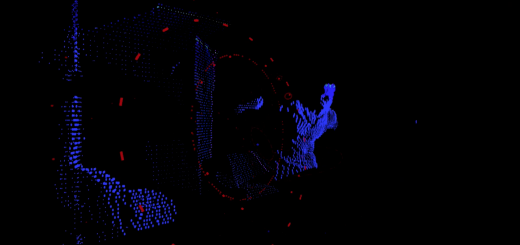

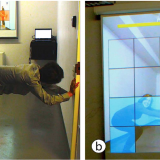

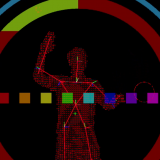

To address the many interactivity issues that dominated my recent work with the Kinect in Processing, I began writing new library classes and algorithms. I started by adapting the skeleton library from Kinect Projector Toolkit, which builds on SimpleOpenNI with a number of tweaks that allow for more accurate joint and silhouette tracking. With more accurate joint tracking in place, I began adapting an algorithm that lets button objects be created, displayed, and tracked with a single call. One too many sleepless nights later, I had accomplished just that for simple PShapes. Taking the logic a step further, I created an adaptable algorithm that translates elements from an SVG file into interactive on-screen components.
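To give a rough idea of the button logic, here is a minimal sketch of a joint-driven button, written in plain Java so it runs outside Processing. It is not the code from the repository: the class name `JointButton`, its fields, and the `update` method are all hypothetical, and it assumes the joint position has already been projected into screen coordinates by the skeleton tracker. It also uses a plain rectangle for the hit area, where the real version works with PShapes.

```java
// Hypothetical helper for illustration; not taken from the project's repository.
class JointButton {
    final float x, y, w, h;   // bounding box in screen pixels (assumed rectangular)
    private boolean inside;   // was the joint inside the box on the previous frame?

    JointButton(float x, float y, float w, float h) {
        this.x = x;
        this.y = y;
        this.w = w;
        this.h = h;
    }

    // True if the point lies within the bounding box (edges inclusive).
    boolean contains(float px, float py) {
        return px >= x && px <= x + w && py >= y && py <= y + h;
    }

    // Call once per frame with the projected joint position.
    // Returns true only on the frame the joint first enters the button,
    // so holding a hand over the button does not retrigger it.
    boolean update(float jointX, float jointY) {
        boolean now = contains(jointX, jointY);
        boolean entered = now && !inside;
        inside = now;
        return entered;
    }
}
```

In a Processing sketch, something like this would be called from `draw()` for each tracked hand joint, with the button firing its action whenever `update` returns true. Triggering on entry rather than on every overlapping frame is one simple way to keep a hovering hand from firing the same button repeatedly.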

This algorithm can be seen in action above and, along with the simple shape version, picked apart on GitHub:

GitHub Repository: https://github.com/XBudd/ART-3092-Projects-in-Processing/tree/master/Project_4